What 5K Support Tickets Taught Me About Fine-Tuning

And where prompting stops working and fine-tuning actually earns its keep

At first glance, this is about support tickets.

It's not.

It's about a class of problems that quietly show up everywhere in enterprise systems:

- repetitive decisions

- messy inputs

- and a need for clean, consistent outputs

Support tickets just happen to be one of the clearest places to see it.

And once you see the pattern, you start noticing it everywhere.

The pattern most teams miss

Across teams and systems, these problems start to look the same:

- classifying inbound requests

- routing work to the right team

- tagging content

- prioritizing issues

- enforcing structured outputs (usually JSON)

Different surface areas. Same shape.

You're not asking the model to be creative. You're asking it to make the same decision, over and over again, without drifting. That's a very different problem from the one most people think they're solving.

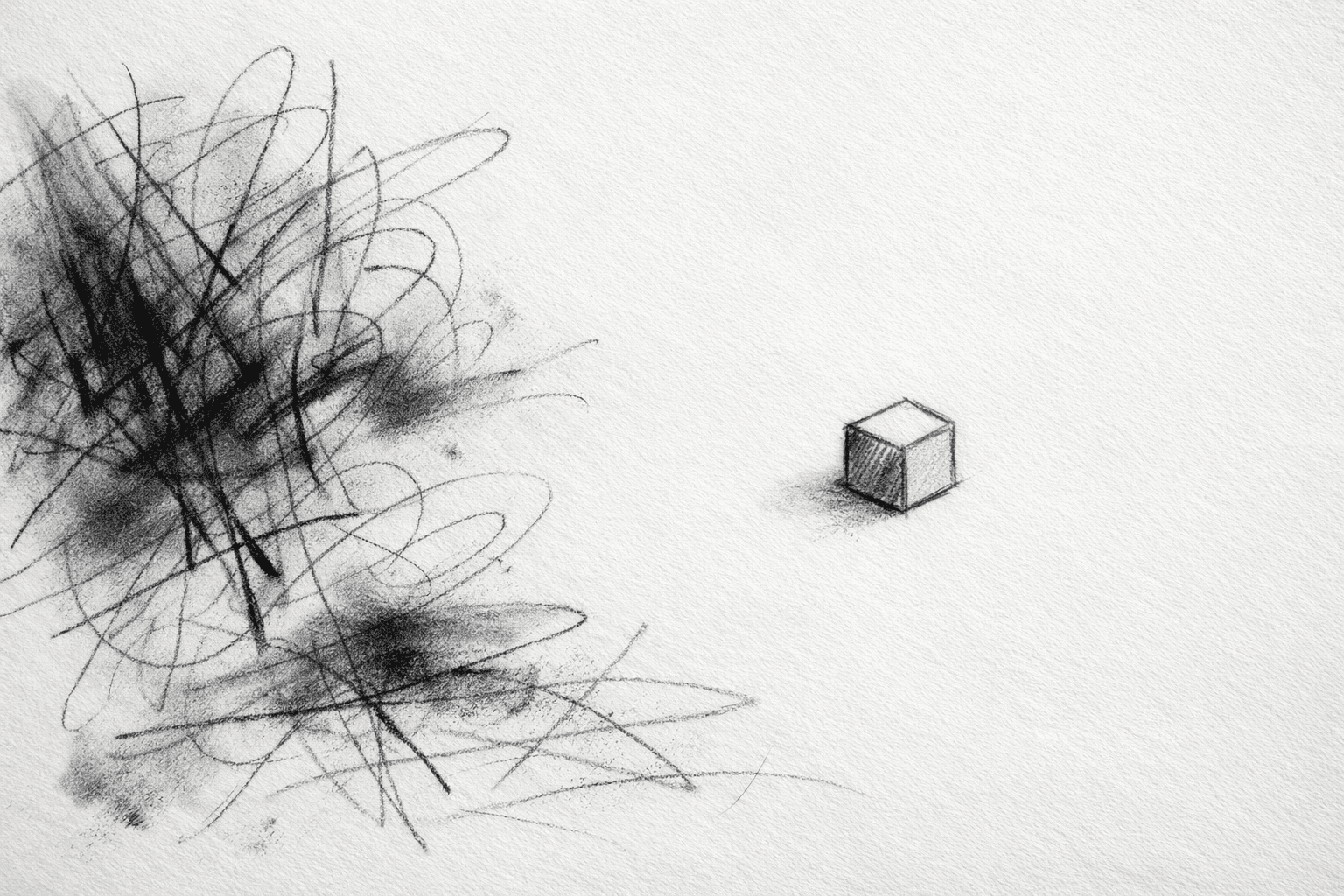

The trap most teams fall into

When people first discover fine-tuning, it's easy to think:

"This is how we make the model smarter."

So they reach for it early. Too early.

In reality, prompting (maybe with a few examples) gets you surprisingly far. Far enough that fine-tuning often feels unnecessary.

Until it doesn't.

There's a point where your prompt grows, then grows again, then quietly turns into something fragile. That's usually the signal.

A concrete example: what this looks like in support tickets

Imagine thousands of tickets coming in:

- "Open rate looks normal, but delivered volume is far below expected"

- "My campaign did not send to everyone"

- "Login works on desktop but not mobile"

Now you need to decide:

- category

- priority

- escalation

- owning team
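
Written down, those four decisions amount to a small schema. Here's a minimal Python sketch of one, with a strict parser for the model's JSON output. The label sets, the `TicketDecision` class, and the `parse_decision` helper are hypothetical placeholders, not a real taxonomy or library API.

```python
import json
from dataclasses import dataclass

# Hypothetical label sets; swap in your own taxonomy.
CATEGORIES = {"deliverability", "campaign_sending", "authentication", "other"}
PRIORITIES = {"low", "medium", "high", "urgent"}
TEAMS = {"email_infra", "campaigns", "identity", "general_support"}
EXPECTED_KEYS = {"category", "priority", "escalate", "owning_team"}


@dataclass(frozen=True)
class TicketDecision:
    category: str
    priority: str
    escalate: bool
    owning_team: str


def parse_decision(raw: str) -> TicketDecision:
    """Parse a model's JSON output, raising ValueError on anything off-schema."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict) or set(data) != EXPECTED_KEYS:
        raise ValueError(f"expected exactly the keys {sorted(EXPECTED_KEYS)}")
    # Each string field must be one of its allowed labels.
    for field, allowed in (
        ("category", CATEGORIES),
        ("priority", PRIORITIES),
        ("owning_team", TEAMS),
    ):
        value = data[field]
        if not isinstance(value, str) or value not in allowed:
            raise ValueError(f"invalid {field}: {value!r}")
    if not isinstance(data["escalate"], bool):
        raise ValueError("escalate must be true or false")
    return TicketDecision(**data)


decision = parse_decision(
    '{"category": "authentication", "priority": "medium",'
    ' "escalate": false, "owning_team": "identity"}'
)
print(decision)
```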

Seems straightforward.

It's not.

Where prompting starts to break

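The first version looks simple: a short instruction asking the model to read the ticket and return a category, priority, escalation decision, and owning team.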
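
As a hypothetical illustration (the exact wording and the `build_prompt` helper are assumptions for this sketch), that first version might be little more than this:

```python
def build_prompt(ticket_text: str) -> str:
    """A deliberately simple, zero-shot first version of the triage prompt."""
    return (
        "You are a support triage assistant.\n"
        "Read the ticket below and reply with a JSON object containing "
        '"category", "priority", "escalate" (true or false), and "owning_team".\n'
        "Reply with the JSON object only.\n\n"
        f"Ticket:\n{ticket_text.strip()}"
    )


print(build_prompt("Login works on desktop but not mobile"))
```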

Then you add examples. Then more. Then edge cases.

Before long, your prompt starts to look less like a prompt and more like a knowledge base.

And still:

- outputs drift

- categories blur

- formatting breaks

- edge cases pile up
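
Drift is easier to argue about once you measure it. One rough approach, sketched below under the assumption that you can sample the same ticket several times from the same prompt, is to count how often the output fails to parse and how often the category agrees with the majority. The metric names and the `consistency_report` helper are made up for this example.

```python
import json
from collections import Counter


def consistency_report(raw_outputs: list[str]) -> dict:
    """Summarize how stable repeated model outputs for ONE ticket are."""
    if not raw_outputs:
        raise ValueError("need at least one output")
    categories = []
    format_failures = 0
    for raw in raw_outputs:
        try:
            category = json.loads(raw)["category"]
        except (json.JSONDecodeError, KeyError, TypeError):
            # Not JSON, not an object, or missing the key: a formatting break.
            format_failures += 1
            continue
        if isinstance(category, str):
            categories.append(category)
        else:
            format_failures += 1
    if categories:
        majority, count = Counter(categories).most_common(1)[0]
        agreement = count / len(categories)
    else:
        majority, agreement = None, 0.0
    return {
        "format_failure_rate": format_failures / len(raw_outputs),
        "majority_category": majority,
        # Share of parseable outputs that match the majority category.
        "category_agreement": agreement,
    }


# Four hypothetical responses to the same ticket, sampled from the same prompt.
outputs = [
    '{"category": "deliverability"}',
    '{"category": "deliverability"}',
    '{"category": "campaign_sending"}',
    'Sure! Here is the JSON: {"category": "deliverability"}',
]
print(consistency_report(outputs))
```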

It works, but not reliably enough to trust. So you start patching it:

- longer prompts

- more examples

- more guardrails
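
In code, those guardrails usually end up as a validate-and-retry loop around the model call. A minimal sketch, where `call_model` is a hypothetical stand-in for whatever client you use and validation only checks that the JSON has the expected keys:

```python
import json
from typing import Callable, Optional

REQUIRED_KEYS = {"category", "priority", "escalate", "owning_team"}


def classify_with_guardrails(
    ticket_text: str,
    call_model: Callable[[str], str],
    max_attempts: int = 3,
) -> Optional[dict]:
    """Call the model, validate its JSON, and retry on bad output.

    Returns None when every attempt fails, so the caller can route the
    ticket to a human queue instead.
    """
    prompt = f"Classify this ticket as JSON with keys {sorted(REQUIRED_KEYS)}:\n{ticket_text}"
    for _ in range(max_attempts):
        raw = call_model(prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed JSON: spend another request and try again
        if isinstance(data, dict) and set(data) == REQUIRED_KEYS:
            return data
    return None


# A fake model that answers badly once, then correctly.
responses = iter([
    "Here you go!",
    '{"category": "authentication", "priority": "medium",'
    ' "escalate": false, "owning_team": "identity"}',
])
result = classify_with_guardrails(
    "Login works on desktop but not mobile", lambda _prompt: next(responses)
)
print(result["owning_team"])  # identity
```

Every retry is another full-length request, so each guardrail adds cost, latency, and one more piece of logic to keep in sync with the prompt.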

At some point, you're not building a system anymore. You're babysitting one.

The shift: from capability to consistency

This is where the mental model changes.

- Prompting gives you capability

- Fine-tuning gives you consistency at scale

Fine-tuning isn't about making the model smarter.

It's about removing variability.

You're taking a task the model can already do and making it behave the same way every time.

The dataset

This is the real work.

If you have been running a system like this, you already have the data. That is the part most people miss.

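A simple example is a single past ticket paired with the decision that was made for it: category, priority, escalation, and owning team.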
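
Assuming a chat-style JSONL format like the one OpenAI's fine-tuning API accepts (one JSON object per line, each holding a `messages` list; other providers use different shapes), one training example might be built like this. The ticket, the labels, and the `to_training_record` helper are hypothetical.

```python
import json

SYSTEM = "Classify the support ticket. Respond with JSON only."

# One hypothetical historical ticket and the decision that was made for it.
history = [
    {
        "text": "Login works on desktop but not mobile",
        "decision": {
            "category": "authentication",
            "priority": "medium",
            "escalate": False,
            "owning_team": "identity",
        },
    },
]


def to_training_record(ticket: dict) -> str:
    """Turn one (input, output) pair into a single chat-format JSONL line."""
    record = {
        "messages": [
            {"role": "system", "content": SYSTEM},
            {"role": "user", "content": ticket["text"]},
            # The assistant turn is the exact output the model should learn to produce.
            {"role": "assistant", "content": json.dumps(ticket["decision"], sort_keys=True)},
        ]
    }
    return json.dumps(record)


jsonl = "\n".join(to_training_record(t) for t in history) + "\n"
print(jsonl, end="")
```

`sort_keys=True` means every target serializes its keys in the same order, so the model sees one canonical output format rather than several equivalent ones.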

That's it. Input to output. No clever prompting required.

The quality of your fine-tuned model is almost entirely determined by how consistent this data is.
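
One cheap way to check that consistency before training, sketched under the assumption that your history can be reduced to (ticket text, category) pairs: normalize the text and flag any input that was labeled more than one way. This only catches duplicates after normalization, not paraphrases, and `find_label_conflicts` is a hypothetical helper.

```python
from collections import defaultdict


def find_label_conflicts(examples: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Return normalized inputs that appear with more than one label."""
    labels_by_text: dict[str, set[str]] = defaultdict(set)
    for text, label in examples:
        # Lowercase and collapse whitespace so trivial differences don't hide duplicates.
        normalized = " ".join(text.lower().split())
        labels_by_text[normalized].add(label)
    return {text: labels for text, labels in labels_by_text.items() if len(labels) > 1}


examples = [
    ("My campaign did not send to everyone", "campaign_sending"),
    ("my campaign did not send to  everyone", "deliverability"),
    ("Login works on desktop but not mobile", "authentication"),
]
print(find_label_conflicts(examples))
```

Each conflict is either a labeling mistake or a sign that two categories overlap. Either way, it's something the fine-tuned model would otherwise learn.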

Why fine-tuning wins here

Once trained, the behavior shifts in a few important ways:

- Consistency: same input, same output structure, every time

- Lower latency: shorter prompts mean faster responses

- Lower cost: fewer tokens per request

- Simpler system design: no giant prompt to maintain or debug
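
The cost point is mostly arithmetic on input tokens. The sketch below uses purely hypothetical sizes (a 3,000-token few-shot prompt versus a 50-token instruction, 1,000 tickets a day) to show the shape of the calculation. Multiply by your provider's per-token rate, and keep in mind that some providers charge a different, often higher, rate for fine-tuned models, plus the cost of the training run itself.

```python
def monthly_input_tokens(
    requests_per_day: int, prompt_tokens: int, ticket_tokens: int, days: int = 30
) -> int:
    """Input tokens sent per month: fixed prompt overhead plus the ticket itself."""
    return requests_per_day * days * (prompt_tokens + ticket_tokens)


# Purely hypothetical sizes: a long few-shot prompt vs. a short instruction
# sent to a fine-tuned model, for tickets averaging 150 tokens.
few_shot = monthly_input_tokens(requests_per_day=1_000, prompt_tokens=3_000, ticket_tokens=150)
fine_tuned = monthly_input_tokens(requests_per_day=1_000, prompt_tokens=50, ticket_tokens=150)
print(few_shot, fine_tuned)  # 94500000 6000000
```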

You stop fighting the model. It becomes predictable. It starts behaving like a component in your system.

This is not about tickets

This is where people either get it or miss it entirely.

Support tickets are just one example of a broader category:

- classifying leads

- tagging product feedback

- routing internal requests

- labeling logs or errors

- deciding which variant to serve in a personalization system

Different domains. Same underlying problem.

Same pattern:

- high volume

- repeatable decisions

- structured outputs

That's where fine-tuning earns its keep.

When you should not fine-tune

Most use cases don't need fine-tuning.

Do not fine-tune if:

- the task is low volume

- your categories are unclear or constantly changing

- you don't have clean, consistent examples

- you are still figuring out the problem

In those cases, prompting is the better tool.

The bigger shift

What's happening here is subtle.

We're moving from:

"How do I prompt the model to do this?"

To:

"How do I make this behavior reliable inside a system?"

That's a different mindset. Less about clever prompts. More about designing repeatable outcomes.

Fine-tuning is not magic. It is just pattern recognition over examples you already have.

But when you apply it to the right class of problems, the ones that show up thousands of times a day, it stops feeling like a demo...

...and starts behaving like infrastructure.